Putting the Neural back into Networks

Part 1: Why spikes?

11th January, 2020

A rock pool

A Python package I'm working on combines several submodules with mixed licensing: some will be open source and redistributed, others will be proprietary and in-house only. I wanted to make the package easier to import by automatically detecting which submodules are present and dynamically importing only those.
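As a rough illustration of the idea, here is a minimal sketch using only the standard library's `importlib`. It is not the approach taken in the post itself; the helper name `import_available` and the module names in the example are hypothetical stand-ins, and a real package would list its own submodule names instead.

```python
import importlib
import importlib.util


def import_available(candidates):
    """Import each module in `candidates` that can be found; skip the rest.

    Hypothetical helper for illustration: returns (loaded, missing), where
    `loaded` maps module name to module object and `missing` lists the
    names that could not be located.
    """
    loaded, missing = {}, []
    for name in candidates:
        try:
            spec = importlib.util.find_spec(name)
        except ModuleNotFoundError:
            # Raised when the parent package of a dotted name is absent.
            spec = None
        if spec is None:
            missing.append(name)
            continue
        loaded[name] = importlib.import_module(name)
    return loaded, missing


# Stand-in names (assumptions): "json" plays an open-source submodule that
# is present; the second name plays a proprietary one that was not shipped.
loaded, missing = import_available(["json", "hypothetical_inhouse_submodule"])
print(sorted(loaded), missing)
```

Checking with `find_spec` before importing means a missing submodule is skipped quietly, while a submodule that is present but broken still raises its own error on import rather than being silently hidden.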

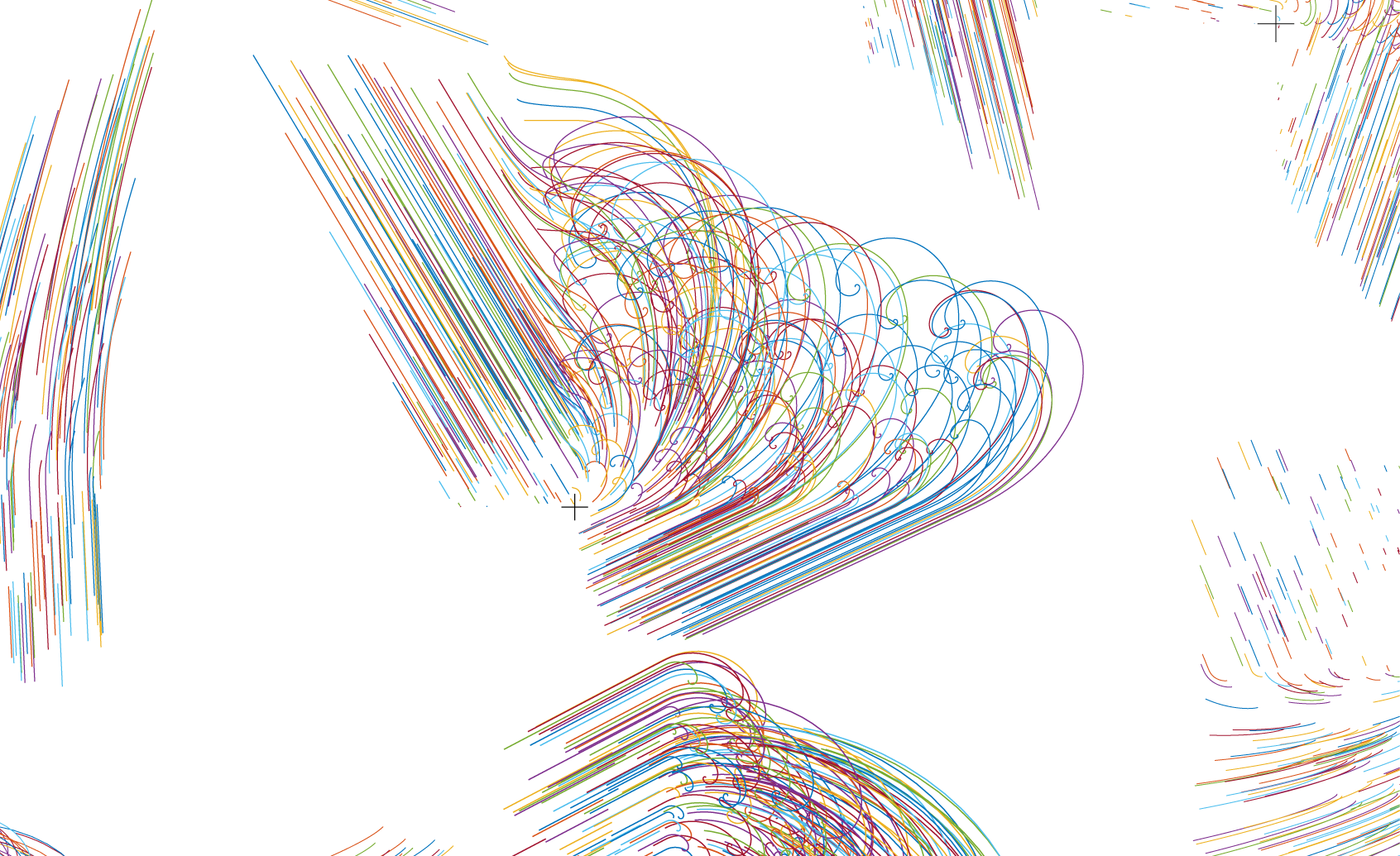

The dynamics of simple nonlinear systems can be dramatically beautiful. As an exploration, I visualised a large number of randomly generated systems.

Seeking a minimum

Stochastic gradient descent is a powerful tool for optimisation that relies on estimating gradients over small, randomly selected batches of data. This approach is efficient (since gradients only need to be evaluated over a few data points at a time) and uses the noise inherent in the stochastic gradient estimates to help escape local minima. This is a Matlab implementation of a recent, powerful SGD algorithm.
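To make the batch-wise gradient estimate concrete, here is a minimal sketch of plain minibatch SGD fitting a straight line. It is written in Python rather than Matlab, and it is the textbook update rule, not the more recent algorithm the post implements. The function name, learning rate, batch size and toy data are all assumptions chosen for illustration.

```python
import random


def sgd_linear_fit(xs, ys, lr=0.05, batch_size=4, epochs=200, seed=0):
    """Fit y ~ w*x + b by minibatch SGD on the mean squared error."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        # Visit the data in a fresh random order each epoch.
        rng.shuffle(indices)
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            n = len(batch)
            # Gradient of the squared error, estimated on this batch only.
            grad_w = sum(2 * (w * xs[i] + b - ys[i]) * xs[i] for i in batch) / n
            grad_b = sum(2 * (w * xs[i] + b - ys[i]) for i in batch) / n
            # Step against the estimated gradient.
            w -= lr * grad_w
            b -= lr * grad_b
    return w, b


# Toy, noise-free data drawn from y = 3x - 1 (an assumption for the demo).
xs = [i / 10 for i in range(20)]
ys = [3.0 * x - 1.0 for x in xs]
w, b = sgd_linear_fit(xs, ys)
print(w, b)
```

Each update touches only `batch_size` points, which is where the efficiency comes from. On a convex toy problem like this there are no local minima to escape, so the sketch shows the mechanics of the update rather than the benefit of gradient noise.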

Our brain does not faithfully interpret visual information, but instead uses a complex mixture of prior experience and context to shape our perception of the world. We described a pathway in the brain for this contextual information, from the mouse thalamus to visual cortex.